Machine learning has grown rapidly in importance in recent years, but hardware development has taken time to catch up with the demands of these compute- and power-hungry algorithms. Nearly every major tech company is now developing chips for artificial intelligence.

Although manufacturers have succeeded in making hardware lighter and faster, chip technology is still improving continuously.

More than 100 companies are working on next-generation hardware and chips that can keep up with increasingly sophisticated algorithms.

These chips can enable deep learning applications on phones and other edge computing devices.

Artificial Intelligence Chips –

1. Intel’s Nervana

Intel recently revealed new details of its upcoming high-performance AI accelerators, the Intel Nervana neural network processors. They are built around two real-world considerations: training a network as quickly as possible, and doing so within a given power budget.

The processor is designed for flexibility, balancing compute, communication, and memory.

2. AMD Radeon Instinct

Radeon Instinct is a training accelerator for machine intelligence and deep learning.

It is built on AMD's "Vega" graphics architecture, which is designed to handle large data sets and varied compute workloads.

It delivers up to 24.6 TFLOPS of peak FP16 compute performance for deep learning applications.
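To put a peak-throughput number in perspective, here is a minimal Python sketch that turns the 24.6 TFLOPS figure above into a best-case time estimate. The workload size (1e15 operations) and the 50% utilization value are assumptions chosen only for illustration; real workloads rarely sustain peak throughput.

```python
# Rough lower bound on compute time derived from a peak-throughput figure.
PEAK_FP16_TFLOPS = 24.6  # peak FP16 figure quoted in the article


def min_seconds(total_flop: float,
                peak_tflops: float = PEAK_FP16_TFLOPS,
                utilization: float = 1.0) -> float:
    """Return the minimum time in seconds to execute `total_flop` operations.

    `utilization` is the assumed fraction of peak throughput actually reached.
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    return total_flop / (peak_tflops * 1e12 * utilization)


# Hypothetical workload: 1e15 floating-point operations (an assumed number).
workload = 1e15
print(f"at peak throughput:  {min_seconds(workload):.1f} s")
print(f"at 50% utilization: {min_seconds(workload, utilization=0.5):.1f} s")
```

Halving the assumed utilization doubles the estimate, which is why peak TFLOPS is best read as an upper bound on speed rather than a prediction.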

3. Samsung Exynos 9

Samsung's Exynos 9820 has a dedicated hardware AI accelerator, or NPU (neural processing unit), which performs AI-related tasks almost seven times faster than its predecessor.

It targets AI processing that runs directly on the device instead of being offloaded to a server, which gives faster performance and better security.

4. Nokia ReefShark

ReefShark is a new chipset that helps ease 5G network roll-out. AI is built into the chip design for the radio and embedded in the baseband, which uses augmented deep learning to trigger smart, rapid actions by the autonomous, cognitive network. This helps enhance network optimization and expand business opportunities.

5. Apple A13 Bionic

This processor powers Apple's iPhone 11. Apple says it is the fastest processor the company has made.

The chip features an Apple-designed 64-bit ARMv8.3-A six-core CPU, with two high-performance cores running at 2.65 GHz. Compared with the previous generation, the two high-performance cores are 20% faster with a 30% reduction in power consumption, and the four high-efficiency cores are 20% faster with a 40% reduction in power consumption.
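The speed and power percentages above can be combined into a single performance-per-watt ratio. The short Python sketch below does that arithmetic. It assumes the speedup and the power reduction apply at the same time, which the quoted figures do not guarantee, so treat the results as a best case.

```python
def perf_per_watt_gain(speedup_pct: float, power_reduction_pct: float) -> float:
    """Return the performance-per-watt ratio relative to the previous generation.

    Assumes the speedup and the power saving hold simultaneously (best case).
    """
    if not 0.0 <= power_reduction_pct < 100.0:
        raise ValueError("power_reduction_pct must be in [0, 100)")
    relative_speed = 1 + speedup_pct / 100
    relative_power = 1 - power_reduction_pct / 100
    return relative_speed / relative_power


# Figures quoted in the article for the two core types.
performance_cores = perf_per_watt_gain(20, 30)
efficiency_cores = perf_per_watt_gain(20, 40)

print(f"high-performance cores: {performance_cores:.2f}x performance per watt")
print(f"high-efficiency cores:  {efficiency_cores:.2f}x performance per watt")
```

Under that assumption, the high-performance cores come out at roughly 1.7 times the performance per watt and the high-efficiency cores at roughly 2 times.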

6. Google Edge TPU

This chip by Google is purpose-built to run AI at the edge. It delivers high performance in a small physical and power footprint, enabling the deployment of high-accuracy AI at the edge.

Edge TPU combines custom hardware, open software, and state-of-the-art AI algorithms to provide high-quality, easy-to-deploy AI solutions for the edge.

7. Graphcore IPU

Graphcore's Intelligence Processing Unit (IPU) is completely different from the GPUs and CPUs available today.

It is a highly flexible, easy-to-use parallel processor designed from the ground up to deliver state-of-the-art performance on current machine intelligence models for both training and inference.

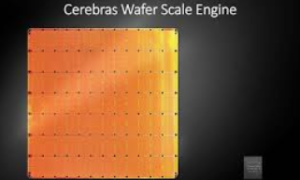

8. Cerebras Wafer Scale Engine

While most manufacturers race to make thinner, smaller, and more affordable chips, Cerebras has released the Wafer Scale Engine, a 215 mm x 215 mm chip targeted at deep learning applications.

It packs 1.2 trillion transistors onto a single chip with 400,000 AI-optimised cores, connected by a 100 Pbit/s interconnect. These cores are fed by 18 GB of fast on-chip memory with 9 PB/s of memory bandwidth.
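The headline figures are easier to grasp when broken down per core and per unit of area. The Python sketch below derives a few such numbers from the values quoted above. It assumes decimal units (1 GB = 10^9 bytes, 1 PB = 10^15 bytes) and an even split of memory and bandwidth across cores, so the results are averages, not vendor specifications.

```python
# Figures quoted in the article for the Cerebras Wafer Scale Engine.
SIDE_MM = 215                      # chip is 215 mm x 215 mm
TRANSISTORS = 1.2e12               # 1.2 trillion
CORES = 400_000
ON_CHIP_MEMORY_BYTES = 18e9        # 18 GB, assuming decimal gigabytes
MEMORY_BANDWIDTH_BYTES_S = 9e15    # 9 PB/s, assuming decimal petabytes

# Derived values (averages, assuming resources are spread evenly over cores).
area_mm2 = SIDE_MM * SIDE_MM
transistors_per_mm2 = TRANSISTORS / area_mm2
memory_per_core_bytes = ON_CHIP_MEMORY_BYTES / CORES
bandwidth_per_core_bytes_s = MEMORY_BANDWIDTH_BYTES_S / CORES

print(f"die area:             {area_mm2:,} mm^2")
print(f"transistor density:   {transistors_per_mm2 / 1e6:.1f} million per mm^2")
print(f"memory per core:      {memory_per_core_bytes / 1e3:.0f} KB")
print(f"bandwidth per core:   {bandwidth_per_core_bytes_s / 1e9:.1f} GB/s")
```

The area works out to 46,225 mm^2, which gives a sense of how far this design departs from conventional chips that occupy only a small part of a wafer.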

9. Huawei Ascend 910

Ascend 910 is a new AI processor in Huawei's Ascend-Max chipset series. After a long period of development, test results show that the processor meets its planned performance goals with lower power consumption than originally specified.

10. Alibaba Pingtouge Hanguang

Alibaba has unveiled its first dedicated AI processor, designed for large-scale AI inference in the cloud.

The 12 nm Hanguang 800 contains 17 billion transistors. According to Alibaba, it is 15 times more powerful than the NVIDIA T4 GPU and nearly 46 times more powerful than the NVIDIA P4 GPU.

Conclusion

AI is already everywhere today, from consumer products to large business applications. With the extraordinary rise of connected devices, combined with demands for privacy, low latency, and limited bandwidth, the hardware used for training AI models needs to have that additional edge.
